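Introduction

A "wake word" is a specific phrase, such as "Hey Siri" or "OK, Google", that triggers a system to start up.

For example, if you build one into an AI voice-chat app as the activation trigger, the AI will not react on its own until you actually speak to it, which is very handy.

In this article, I explain how to implement a wake word in Unity using Picovoice (Porcupine), which is free and easy to get started with.

To make the implementation easy to reuse later on other platforms (Windows, Android, iOS, etc.), I call Porcupine's common C API directly from Unity instead of using a platform-specific SDK.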
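To give a feel for why this approach ports easily: with the C API, the P/Invoke declarations can stay the same on every platform, and usually only the library name passed to `DllImport` changes. The sketch below assumes Unity's convention that statically linked iOS plugins are bound through the special name `__Internal`; whether PvPorcupine.xcframework is linked that way in your build, and which name other desktop platforms need, are assumptions to verify. `PorcupineLibrary` and `LibraryName` are hypothetical names.

```csharp
using System;
using System.Runtime.InteropServices;

// Hypothetical helper: chooses the P/Invoke library name per platform.
public static class PorcupineLibrary
{
#if UNITY_IOS && !UNITY_EDITOR
    // Assumption: on iOS the framework is statically linked into the app,
    // so Unity resolves the C symbols through "__Internal".
    public const string LibraryName = "__Internal";
#else
    // Android: the loader maps "pv_porcupine" to libpv_porcupine.so.
    public const string LibraryName = "pv_porcupine";
#endif

    // The declaration itself is shared; only LibraryName differs per platform.
    [DllImport(LibraryName)]
    public static extern int pv_sample_rate();
}
```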

Development environment

Unity 6.3

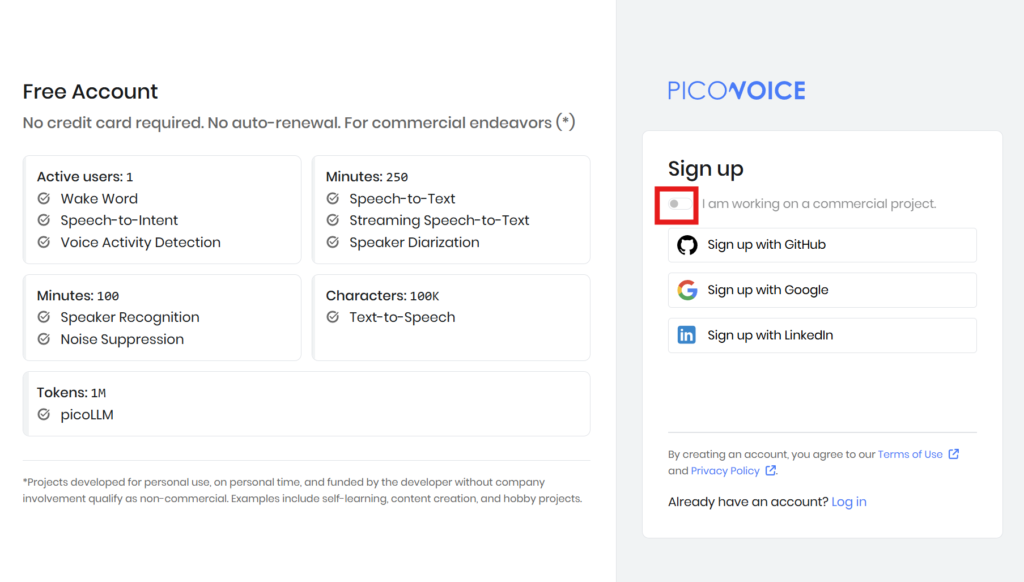

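What you can do for free (Picovoice Free Plan)

Picovoice can be used for free in non-commercial personal projects. The main limits are as follows.

- Custom wake word creation: up to 1 model (1 word) per month

- Monthly active users (MAU): up to 1 user

- Note: each custom wake word file (.ppn) you download counts as one model per platform (build target).

This is plenty for test runs and personal development.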

Implementation steps

1. Create the wake word model

Sign up for the Picovoice Console and create a non-commercial account.

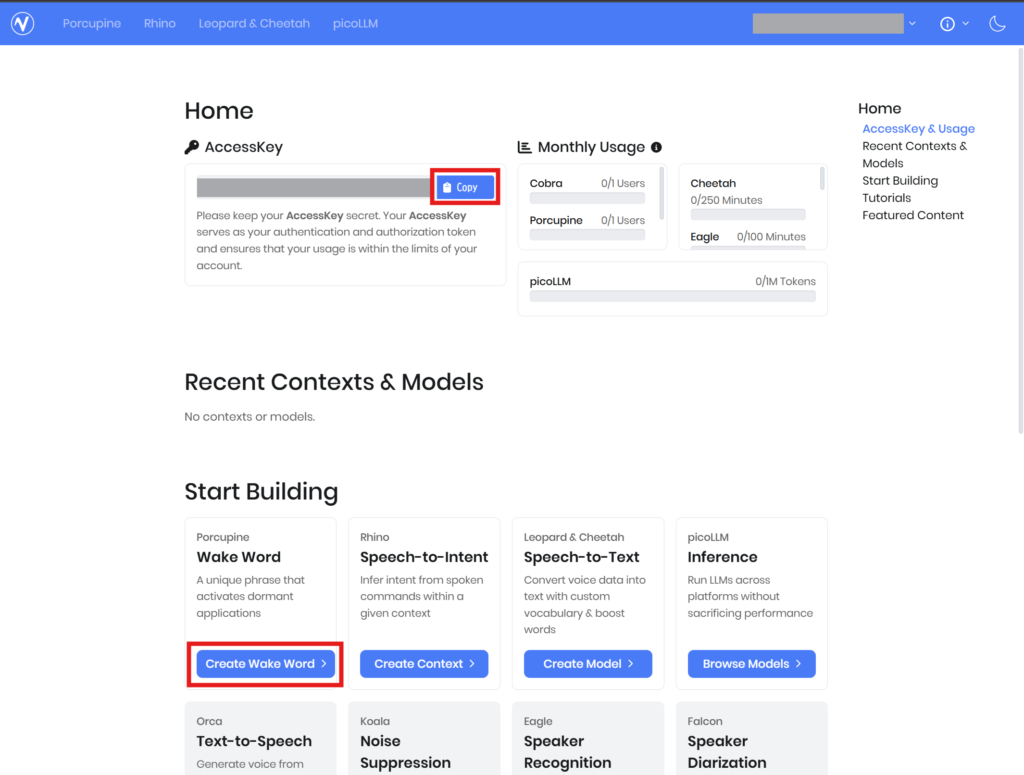

Copy your AccessKey and keep it somewhere safe, then open Porcupine from the console menu.

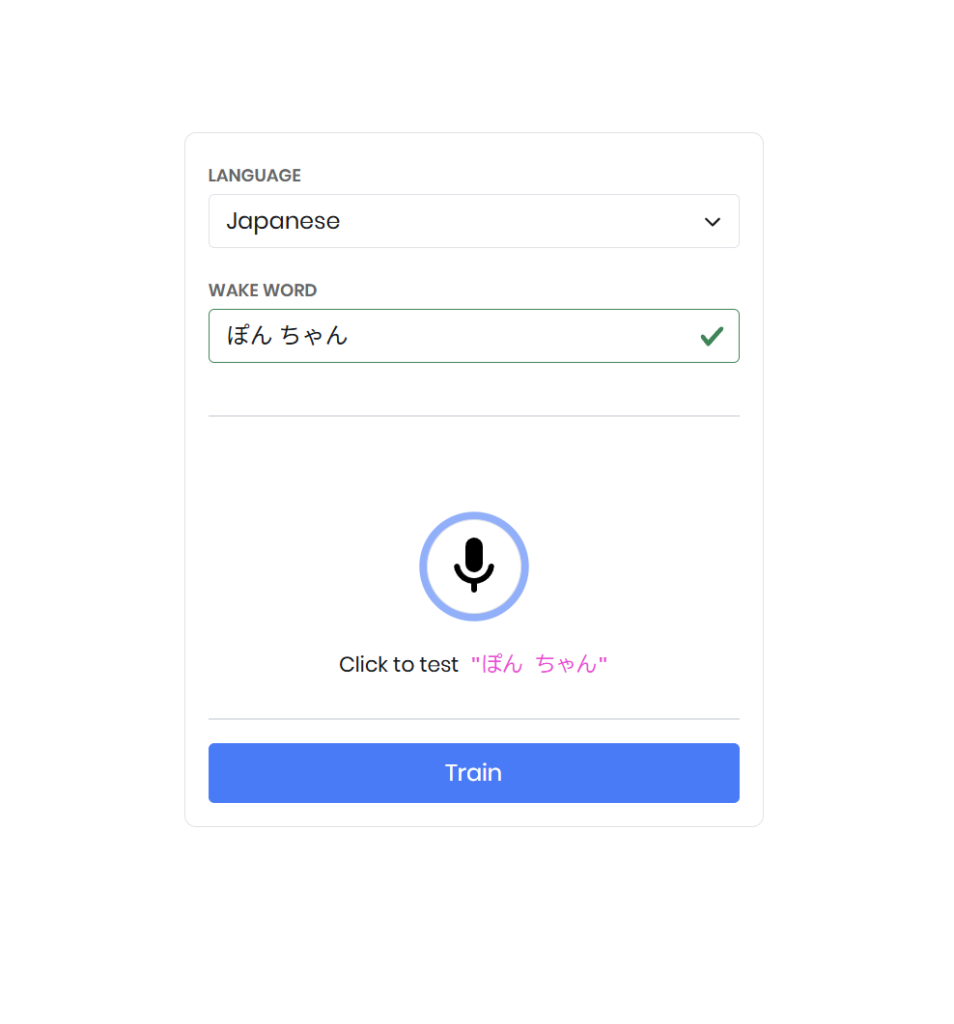

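Select a language (e.g. Japanese) and enter the word you want the system to react to. Use the microphone test to check that it triggers as intended, then press "Train".

Note: when I tried it, "ぽんちゃん" (pon-chan) was rejected, but "ぽん ちゃん" with a space was accepted. Putting a half-width space between words may help.

Select the target platform (Android, Windows, etc.) and download the custom wake word file.

Note: you will use the [YourFileName].ppn file inside the download later.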

2. Get the libraries from the official repository

Open Porcupine's official GitHub repository, choose Code → Download ZIP to download porcupine-master.zip, and extract it.

https://github.com/Picovoice/porcupine

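From the extracted folder, copy the following files and keep them somewhere easy to find.

[Required] Language parameter file

porcupine-master/lib/common/porcupine_params_ja.pv

(Pick the file that matches your language. For English, use porcupine_params.pv.)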
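If you support several languages, the parameter file name can be derived from a language code. The sketch below only encodes the two names given above (English: porcupine_params.pv, Japanese: porcupine_params_ja.pv) and assumes other languages follow the same `_xx` suffix pattern; check lib/common in the repository before relying on that. `PorcupineParams.FileNameFor` is a hypothetical helper.

```csharp
using System;

// Hypothetical helper: maps a language code to a Porcupine language parameter file name.
public static class PorcupineParams
{
    public static string FileNameFor(string languageCode)
    {
        if (string.IsNullOrWhiteSpace(languageCode))
        {
            throw new ArgumentException("Language code is empty.", nameof(languageCode));
        }

        string code = languageCode.Trim().ToLowerInvariant();

        // English uses the file without a language suffix.
        if (code == "en")
        {
            return "porcupine_params.pv";
        }

        // Assumption: other languages use a "_xx" suffix, as Japanese does ("_ja").
        return $"porcupine_params_{code}.pv";
    }
}
```

For example, `FileNameFor("ja")` returns `porcupine_params_ja.pv`, the file used throughout this article.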

[Optional] Libraries for each platform

Get the files for the platforms you want to support.

- Android: porcupine-master/lib/android/arm64-v8a/libpv_porcupine.so

- Windows: porcupine-master/lib/windows/amd64/libpv_porcupine.dll and porcupine-master/lib/windows/amd64/pv_ypu_impl_cuda_porcupine.dll

- iOS: porcupine-master/lib/ios/PvPorcupine.xcframework (use the whole folder)

3. Place the files in the Unity project

Place the custom wake word file and the library files in your Unity project.

Create folders so that the files end up in the following locations.

(Required)

- Assets/StreamingAssets/pv/porcupine_params_ja.pv (language parameters)

- Assets/StreamingAssets/pv/[YourFileName].ppn (custom wake word file)

(For Windows)

- Assets/Plugins/x86_64/libpv_porcupine.dll

- Assets/Plugins/x86_64/pv_ypu_impl_cuda_porcupine.dll

(For Android)

- Assets/Plugins/Android/libs/arm64-v8a/libpv_porcupine.so

(For iOS)

- Assets/Plugins/iOS/PvPorcupine.xcframework
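Since a misplaced file only shows up as an error at runtime on the device, a quick check before building can save a round trip. The sketch below is plain C# (no Unity API), takes the path to the project's Assets folder, and checks for the files listed above for one target. It assumes the Japanese parameter file, leaves iOS out because the xcframework is a folder, and `PorcupineLayoutCheck`, `FindMissing`, and `TargetPlatform` are hypothetical names.

```csharp
using System.Collections.Generic;
using System.IO;

// Hypothetical build target selector for this check.
public enum TargetPlatform
{
    Android,
    Windows,
}

// Hypothetical helper: returns the expected files (relative to Assets) that are missing.
public static class PorcupineLayoutCheck
{
    public static List<string> FindMissing(string assetsRoot, string keywordFileName, TargetPlatform platform)
    {
        var expected = new List<string>
        {
            // Required on every platform (assumes the Japanese parameter file).
            Path.Combine("StreamingAssets", "pv", "porcupine_params_ja.pv"),
            Path.Combine("StreamingAssets", "pv", keywordFileName),
        };

        switch (platform)
        {
            case TargetPlatform.Android:
                expected.Add(Path.Combine("Plugins", "Android", "libs", "arm64-v8a", "libpv_porcupine.so"));
                break;
            case TargetPlatform.Windows:
                expected.Add(Path.Combine("Plugins", "x86_64", "libpv_porcupine.dll"));
                expected.Add(Path.Combine("Plugins", "x86_64", "pv_ypu_impl_cuda_porcupine.dll"));
                break;
        }

        var missing = new List<string>();
        foreach (string relativePath in expected)
        {
            if (!File.Exists(Path.Combine(assetsRoot, relativePath)))
            {
                missing.Add(relativePath);
            }
        }

        return missing;
    }
}
```

Inside the Unity Editor, `Application.dataPath` points at the Assets folder and could be passed as `assetsRoot`.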

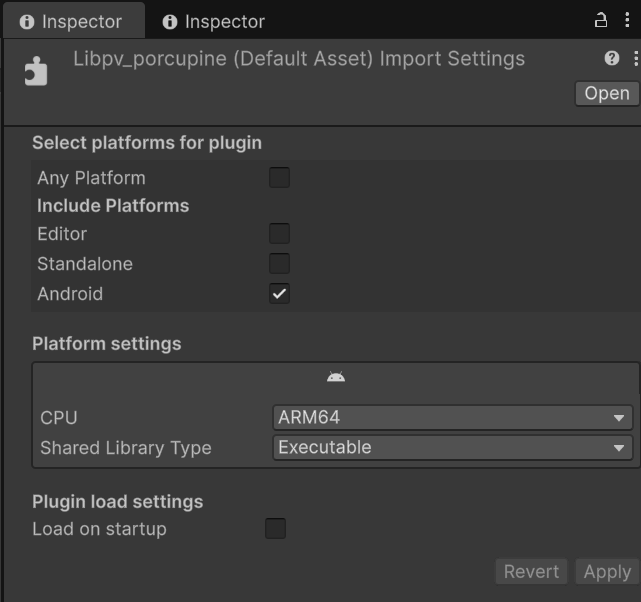

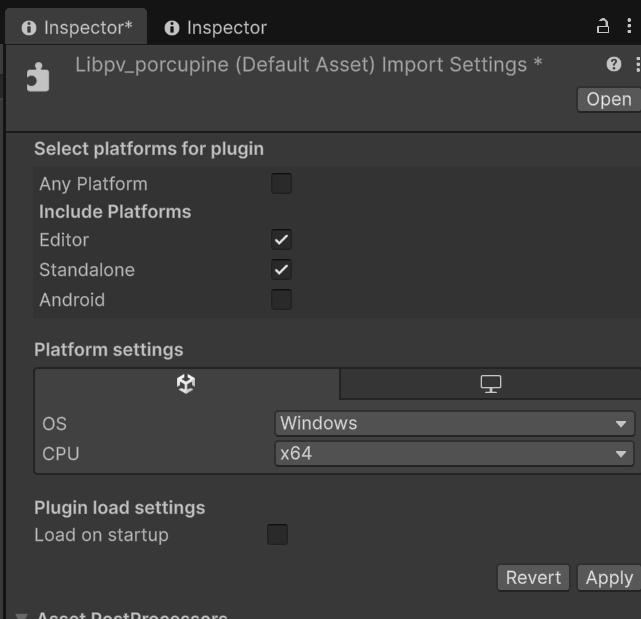

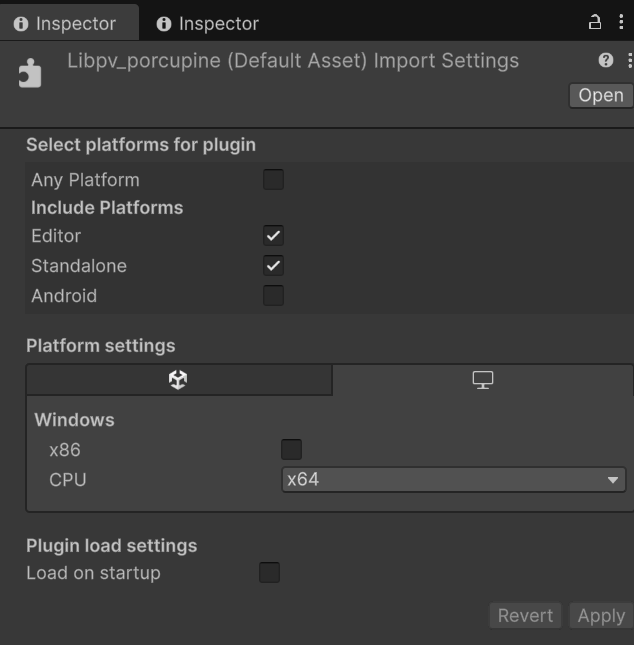

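4. Configure the plugins

Adjust the Import Settings of each platform library you placed under Plugins so that they match the environments you want to run on.

▼ Example settings for Android

▼ Example settings for Windows

5. Implement the scripts

All that's left is to write the code!

Create the following scripts.

- WakeWordController: placed in the scene; coordinates the permission request, initialization, and the detection loop

- MicrophoneFrameSource: captures audio through Unity's Microphone API and converts it into PCM frames that Porcupine can process

- PorcupineWakeWordEngine: a wrapper that calls Porcupine's native library via P/Invoke and checks for the wake word one frame at a time

- WakeWordEventTest: for checking that the detection event works; shows a specified object for a set time when the wake word is detected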
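To make the division of roles concrete, here is a minimal, Unity-free sketch of how the pieces hand data to each other: a frame source fills fixed-length `short[]` buffers, a detector consumes them, and a loop caps how many frames it processes per tick. `IFrameSource`, `IWakeWordDetector`, `WakeWordLoop`, and the two fakes are hypothetical names for illustration, not part of the scripts in this article or of the Porcupine API.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical: the role MicrophoneFrameSource plays (fills one fixed-length PCM frame).
public interface IFrameSource
{
    bool TryReadFrame(short[] destination);
}

// Hypothetical: the role PorcupineWakeWordEngine plays (true when a frame contains the wake word).
public interface IWakeWordDetector
{
    int FrameLength { get; }
    bool ProcessFrame(short[] frame);
}

// Hypothetical loop: processes at most maxFramesPerTick frames per call,
// like WakeWordController.Update does with k_maxFramesPerUpdate.
public sealed class WakeWordLoop
{
    private readonly IFrameSource _source;
    private readonly IWakeWordDetector _detector;
    private readonly short[] _frame;
    private readonly int _maxFramesPerTick;

    public event Action Detected;

    public WakeWordLoop(IFrameSource source, IWakeWordDetector detector, int maxFramesPerTick)
    {
        if (maxFramesPerTick <= 0) throw new ArgumentOutOfRangeException(nameof(maxFramesPerTick));
        _source = source ?? throw new ArgumentNullException(nameof(source));
        _detector = detector ?? throw new ArgumentNullException(nameof(detector));
        _frame = new short[detector.FrameLength];
        _maxFramesPerTick = maxFramesPerTick;
    }

    // Returns how many frames were processed during this tick.
    public int Tick()
    {
        int processed = 0;
        while (processed < _maxFramesPerTick && _source.TryReadFrame(_frame))
        {
            if (_detector.ProcessFrame(_frame))
            {
                Detected?.Invoke();
            }
            processed++;
        }
        return processed;
    }
}

// Fake source for testing: each queued value becomes one frame filled with that value.
public sealed class FakeFrameSource : IFrameSource
{
    private readonly Queue<short> _frameValues;

    public FakeFrameSource(IEnumerable<short> frameValues)
    {
        _frameValues = new Queue<short>(frameValues);
    }

    public bool TryReadFrame(short[] destination)
    {
        if (_frameValues.Count == 0) return false;
        short value = _frameValues.Dequeue();
        for (int i = 0; i < destination.Length; i++) destination[i] = value;
        return true;
    }
}

// Fake detector for testing: "detects" when the first sample exceeds a threshold.
public sealed class FakeDetector : IWakeWordDetector
{
    public int FrameLength => 4;
    public bool ProcessFrame(short[] frame) => frame[0] > 1000;
}
```

With ten queued frames and a cap of 8, the first `Tick` processes 8 frames and the second processes the remaining 2. Keeping the loop free of Unity types like this lets you exercise the detection flow with fake input.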

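Create an empty GameObject in the scene, attach WakeWordController and WakeWordEventTest to it, fill in the Inspector fields, then build and try it out.

Note: the sample code below targets Android. Modify it to fit your own requirements.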

```csharp
using System;
using System.Collections;
using System.IO;
using UnityEngine;
using UnityEngine.Android;
using UnityEngine.Networking;

/// <summary>
/// Scene-side controller for wake word detection.
/// Android-only implementation.
/// </summary>
public sealed class WakeWordController : MonoBehaviour
{
    private const int k_maxFramesPerUpdate = 8;
    private const float k_androidPermissionWaitTimeoutSeconds = 10.0f;

    [Header("Start")]
    [SerializeField] private bool _autoStartListening = true;

    [Header("Picovoice")]
    [SerializeField] private string _accessKey = string.Empty;
    [SerializeField] private float _sensitivity = 0.65f;

    [Header("StreamingAssets Relative Paths")]
    [SerializeField] private string _modelRelativePath = "pv/porcupine_params_ja.pv";
    [SerializeField] private string _keywordRelativePath = "pv/[YourFileName].ppn";

    [Header("Microphone (Optional)")]
    [SerializeField] private string _microphoneDeviceName = string.Empty;
    [SerializeField] private int _microphoneBufferSeconds = 1;

    private Coroutine _startListeningCoroutine;
    private PorcupineWakeWordEngine _wakeWordEngine;
    private MicrophoneFrameSource _microphoneFrameSource;
    private short[] _processingFrameBuffer;
    private bool _isListening;
    private bool _isStarting;

    /// <summary>
    /// Raised when the wake word is detected.
    /// </summary>
    public event Action WakeWordDetectedEvent;

    /// <summary>
    /// Whether the controller is currently listening.
    /// </summary>
    public bool IsListening
    {
        get { return _isListening; }
    }

    private void Start()
    {
        if (_autoStartListening)
        {
            StartListening();
        }
    }

    private void Update()
    {
        if (!_isListening)
        {
            return;
        }

        if (_wakeWordEngine == null || _microphoneFrameSource == null || _processingFrameBuffer == null)
        {
            return;
        }

        try
        {
            // Process at most k_maxFramesPerUpdate frames per Update
            int processedFrameCount = 0;
            while (processedFrameCount < k_maxFramesPerUpdate &&
                   _microphoneFrameSource.TryReadFrame(_processingFrameBuffer))
            {
                bool isDetected = _wakeWordEngine.ProcessFrame(_processingFrameBuffer);
                if (isDetected)
                {
                    Debug.Log($"{nameof(WakeWordController)}: Wake word detected.");
                    RaiseWakeWordDetectedEvent();
                }

                processedFrameCount++;
            }
        }
        catch (Exception exception)
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed while processing microphone frames. {exception}");
            StopListening();
        }
    }

    private void OnDisable()
    {
        StopListening();
    }

    /// <summary>
    /// Starts listening for the wake word.
    /// Does nothing if listening has already started or is starting.
    /// </summary>
    public void StartListening()
    {
        if (_isListening || _isStarting)
        {
            Debug.Log($"{nameof(WakeWordController)}: StartListening was ignored because listener is already active.");
            return;
        }

        _startListeningCoroutine = StartCoroutine(StartListeningCoroutine());
    }

    /// <summary>
    /// Stops listening for the wake word.
    /// </summary>
    public void StopListening()
    {
        if (_startListeningCoroutine != null)
        {
            StopCoroutine(_startListeningCoroutine);
            _startListeningCoroutine = null;
        }

        _isStarting = false;
        _isListening = false;

        if (_microphoneFrameSource != null)
        {
            try
            {
                _microphoneFrameSource.Dispose();
            }
            catch (Exception exception)
            {
                Debug.LogError($"{nameof(WakeWordController)}: Failed to dispose microphone frame source. {exception}");
            }

            _microphoneFrameSource = null;
        }

        if (_wakeWordEngine != null)
        {
            try
            {
                _wakeWordEngine.Dispose();
            }
            catch (Exception exception)
            {
                Debug.LogError($"{nameof(WakeWordController)}: Failed to dispose wake word engine. {exception}");
            }

            _wakeWordEngine = null;
        }

        _processingFrameBuffer = null;
    }

    private IEnumerator StartListeningCoroutine()
    {
        _isStarting = true;

        if (string.IsNullOrWhiteSpace(_accessKey))
        {
            Debug.LogError($"{nameof(WakeWordController)}: AccessKey is empty.");
            _isStarting = false;
            yield break;
        }

        // Request the Android microphone permission
        yield return RequestAndroidMicrophonePermissionCoroutine();

        if (!Permission.HasUserAuthorizedPermission(Permission.Microphone))
        {
            Debug.LogError($"{nameof(WakeWordController)}: Microphone permission was denied.");
            _isStarting = false;
            yield break;
        }

        string modelFilePath = null;
        string keywordFilePath = null;

        yield return ResolveReadableFilePathCoroutine(_modelRelativePath, resolvedPath => modelFilePath = resolvedPath);
        if (string.IsNullOrEmpty(modelFilePath))
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed to resolve model file path.");
            _isStarting = false;
            yield break;
        }

        yield return ResolveReadableFilePathCoroutine(_keywordRelativePath, resolvedPath => keywordFilePath = resolvedPath);
        if (string.IsNullOrEmpty(keywordFilePath))
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed to resolve keyword file path.");
            _isStarting = false;
            yield break;
        }

        try
        {
            _wakeWordEngine = new PorcupineWakeWordEngine();
            _wakeWordEngine.Initialize(
                _accessKey,
                modelFilePath,
                keywordFilePath,
                _sensitivity);

            _microphoneFrameSource = new MicrophoneFrameSource(
                _wakeWordEngine.SampleRate,
                _wakeWordEngine.FrameLength,
                _microphoneDeviceName,
                _microphoneBufferSeconds);
            _microphoneFrameSource.StartCapture();

            _processingFrameBuffer = new short[_wakeWordEngine.FrameLength];
            _isListening = true;

            Debug.Log($"{nameof(WakeWordController)}: Listening started.");
        }
        catch (Exception exception)
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed to start listening. {exception}");
            StopListening();
        }
        finally
        {
            _isStarting = false;
            _startListeningCoroutine = null;
        }
    }

    private void RaiseWakeWordDetectedEvent()
    {
        try
        {
            WakeWordDetectedEvent?.Invoke();
        }
        catch (Exception exception)
        {
            Debug.LogError($"{nameof(WakeWordController)}: WakeWordDetectedEvent invocation failed. {exception}");
        }
    }

    /// <summary>
    /// Copies a file from StreamingAssets to persistentDataPath and returns a readable path.
    /// On Android, StreamingAssets is packed inside the APK and cannot be accessed directly.
    /// </summary>
    private IEnumerator ResolveReadableFilePathCoroutine(string relativePath, Action<string> onCompleted)
    {
        if (string.IsNullOrWhiteSpace(relativePath))
        {
            Debug.LogError($"{nameof(WakeWordController)}: Relative path is empty.");
            onCompleted?.Invoke(null);
            yield break;
        }

        string sourcePath = Path.Combine(Application.streamingAssetsPath, relativePath);
        string destinationPath = Path.Combine(Application.persistentDataPath, relativePath);
        string destinationDirectoryPath = Path.GetDirectoryName(destinationPath);

        if (string.IsNullOrEmpty(destinationDirectoryPath))
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed to resolve destination directory path.");
            onCompleted?.Invoke(null);
            yield break;
        }

        try
        {
            if (!Directory.Exists(destinationDirectoryPath))
            {
                Directory.CreateDirectory(destinationDirectoryPath);
            }
        }
        catch (Exception exception)
        {
            Debug.LogError($"{nameof(WakeWordController)}: Failed to create destination directory. {exception}");
            onCompleted?.Invoke(null);
            yield break;
        }

        using (UnityWebRequest request = UnityWebRequest.Get(sourcePath))
        {
            yield return request.SendWebRequest();

            if (request.result != UnityWebRequest.Result.Success)
            {
                Debug.LogError($"{nameof(WakeWordController)}: Failed to read StreamingAssets file. Path={sourcePath}, Error={request.error}");
                onCompleted?.Invoke(null);
                yield break;
            }

            try
            {
                File.WriteAllBytes(destinationPath, request.downloadHandler.data);
            }
            catch (Exception exception)
            {
                Debug.LogError($"{nameof(WakeWordController)}: Failed to write file to persistentDataPath. {exception}");
                onCompleted?.Invoke(null);
                yield break;
            }
        }

        onCompleted?.Invoke(destinationPath);
    }

    /// <summary>
    /// Requests the Android microphone permission and waits until it is granted (or the wait times out).
    /// </summary>
    private IEnumerator RequestAndroidMicrophonePermissionCoroutine()
    {
        if (Permission.HasUserAuthorizedPermission(Permission.Microphone))
        {
            yield break;
        }

        Permission.RequestUserPermission(Permission.Microphone);

        float startTime = Time.unscaledTime;
        while (!Permission.HasUserAuthorizedPermission(Permission.Microphone))
        {
            if (Time.unscaledTime - startTime > k_androidPermissionWaitTimeoutSeconds)
            {
                yield break;
            }

            yield return null;
        }
    }
}
```
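The cap `k_maxFramesPerUpdate = 8` is easier to judge in milliseconds. The sketch below assumes the values Porcupine typically reports (FrameLength = 512 samples, SampleRate = 16000 Hz); the script above reads the real values from the library at runtime, so compare with the "Initialized" log of your build. `FrameBudget` is a hypothetical helper.

```csharp
using System;

// Hypothetical helper for reasoning about the per-Update frame budget.
public static class FrameBudget
{
    // Duration of one frame in milliseconds.
    public static double FrameDurationMs(int frameLength, int sampleRate)
    {
        if (frameLength <= 0) throw new ArgumentOutOfRangeException(nameof(frameLength));
        if (sampleRate <= 0) throw new ArgumentOutOfRangeException(nameof(sampleRate));
        return frameLength * 1000.0 / sampleRate;
    }

    // Milliseconds of audio one Update can drain when capped at maxFramesPerUpdate frames.
    public static double MaxDrainPerUpdateMs(int frameLength, int sampleRate, int maxFramesPerUpdate)
    {
        return FrameDurationMs(frameLength, sampleRate) * maxFramesPerUpdate;
    }

    // True if the cap drains audio at least as fast as it arrives at the given frame rate.
    public static bool KeepsUp(int frameLength, int sampleRate, int maxFramesPerUpdate, double fps)
    {
        if (fps <= 0) throw new ArgumentOutOfRangeException(nameof(fps));
        double audioPerUpdateMs = 1000.0 / fps;
        return MaxDrainPerUpdateMs(frameLength, sampleRate, maxFramesPerUpdate) >= audioPerUpdateMs;
    }
}
```

Under those assumptions one frame is 32 ms, so one Update can drain up to 256 ms of audio. At 60 fps only about 16.7 ms of audio arrives per Update, so the cap leaves plenty of headroom; a backlog would only build up below roughly 4 fps. Keep in mind that the microphone ring buffer is only `_microphoneBufferSeconds` long (1 s by default), so a stall longer than that overwrites audio that was never read.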

```csharp
using System;
using System.Collections.Generic;
using UnityEngine;

/// <summary>
/// Supplies one frame of PCM (short[]) at a time, as required by Porcupine, from Unity's Microphone API.
/// </summary>
public sealed class MicrophoneFrameSource : IDisposable
{
    private readonly int _targetSampleRate;
    private readonly int _frameLength;
    private readonly string _requestedDeviceName;
    private readonly int _bufferSeconds;
    private readonly Queue<short> _pendingSamples = new Queue<short>();

    private AudioClip _microphoneClip;
    private string _resolvedDeviceName;
    private float[] _sampleScratchBuffer;
    private int _lastReadSamplePosition;
    private int _channelCount;
    private bool _isCapturing;

    // Fields used for resampling
    private int _captureSampleRate;
    private bool _needsResampling;
    private int _resampleAccumulator;
    private float _resampleSampleSum;
    private int _resampleInputCount;

    /// <summary>
    /// Whether capture is currently active.
    /// </summary>
    public bool IsCapturing
    {
        get { return _isCapturing; }
    }

    /// <summary>
    /// Creates a source that produces frames at the sample rate Porcupine requires.
    /// </summary>
    public MicrophoneFrameSource(
        int targetSampleRate,
        int frameLength,
        string requestedDeviceName,
        int bufferSeconds)
    {
        if (targetSampleRate <= 0)
        {
            throw new ArgumentOutOfRangeException(nameof(targetSampleRate));
        }

        if (frameLength <= 0)
        {
            throw new ArgumentOutOfRangeException(nameof(frameLength));
        }

        if (bufferSeconds <= 0)
        {
            throw new ArgumentOutOfRangeException(nameof(bufferSeconds));
        }

        _targetSampleRate = targetSampleRate;
        _frameLength = frameLength;
        _requestedDeviceName = requestedDeviceName;
        _bufferSeconds = bufferSeconds;
    }

    /// <summary>
    /// Starts microphone capture.
    /// </summary>
    public void StartCapture()
    {
        if (_isCapturing)
        {
            Debug.Log($"{nameof(MicrophoneFrameSource)}: StartCapture was ignored because capture is already active.");
            return;
        }

        string[] microphoneDevices = Microphone.devices;
        if (microphoneDevices == null || microphoneDevices.Length == 0)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: No microphone devices were found.");
        }

        _resolvedDeviceName = ResolveDeviceName(microphoneDevices);
        _captureSampleRate = DetermineCaptureSampleRate(_resolvedDeviceName, _targetSampleRate);
        _needsResampling = _captureSampleRate != _targetSampleRate;

        _microphoneClip = Microphone.Start(
            _resolvedDeviceName,
            true,
            _bufferSeconds,
            _captureSampleRate);

        if (_microphoneClip == null)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Microphone.Start returned null.");
        }

        // Wait until the microphone starts recording (poll with a short sleep between checks)
        const int k_micStartPollIntervalMs = 10;
        const float k_micStartTimeoutSeconds = 5.0f;
        float micStartElapsed = 0.0f;
        while (Microphone.GetPosition(_resolvedDeviceName) <= 0)
        {
            System.Threading.Thread.Sleep(k_micStartPollIntervalMs);
            micStartElapsed += k_micStartPollIntervalMs / 1000.0f;
            if (micStartElapsed >= k_micStartTimeoutSeconds)
            {
                StopCapture();
                throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Microphone did not start within {k_micStartTimeoutSeconds}s.");
            }
        }

        if (_microphoneClip.frequency != _captureSampleRate)
        {
            StopCapture();
            throw new InvalidOperationException(
                $"{nameof(MicrophoneFrameSource)}: Unexpected microphone sample rate. Expected={_captureSampleRate}, Actual={_microphoneClip.frequency}");
        }

        _channelCount = _microphoneClip.channels;
        if (_channelCount <= 0)
        {
            StopCapture();
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Invalid microphone channel count.");
        }

        _sampleScratchBuffer = new float[_microphoneClip.samples * _channelCount];
        _pendingSamples.Clear();
        _lastReadSamplePosition = Microphone.GetPosition(_resolvedDeviceName);
        _resampleAccumulator = 0;
        _resampleSampleSum = 0.0f;
        _resampleInputCount = 0;
        _isCapturing = true;

        Debug.Log($"{nameof(MicrophoneFrameSource)}: Capture started. Device={_resolvedDeviceName}, CaptureRate={_captureSampleRate}, TargetRate={_targetSampleRate}, Resampling={_needsResampling}, Channels={_channelCount}");
    }

    /// <summary>
    /// Stops microphone capture.
    /// </summary>
    public void StopCapture()
    {
        if (!_isCapturing)
        {
            return;
        }

        try
        {
            Microphone.End(_resolvedDeviceName);
        }
        catch (Exception exception)
        {
            Debug.LogError($"{nameof(MicrophoneFrameSource)}: Failed to stop microphone. {exception}");
        }

        _microphoneClip = null;
        _sampleScratchBuffer = null;
        _pendingSamples.Clear();
        _lastReadSamplePosition = 0;
        _channelCount = 0;
        _captureSampleRate = 0;
        _needsResampling = false;
        _resampleAccumulator = 0;
        _resampleSampleSum = 0.0f;
        _resampleInputCount = 0;
        _isCapturing = false;
    }

    /// <summary>
    /// Writes one frame of PCM into destinationBuffer if enough samples are available.
    /// </summary>
    public bool TryReadFrame(short[] destinationBuffer)
    {
        if (destinationBuffer == null)
        {
            throw new ArgumentNullException(nameof(destinationBuffer));
        }

        if (destinationBuffer.Length != _frameLength)
        {
            throw new ArgumentException($"{nameof(MicrophoneFrameSource)}: Destination buffer length does not match frame length.");
        }

        if (!_isCapturing)
        {
            return false;
        }

        PumpMicrophoneSamples();

        if (_pendingSamples.Count < _frameLength)
        {
            return false;
        }

        for (int index = 0; index < _frameLength; index++)
        {
            destinationBuffer[index] = _pendingSamples.Dequeue();
        }

        return true;
    }

    public void Dispose()
    {
        StopCapture();
    }

    private string ResolveDeviceName(string[] microphoneDevices)
    {
        if (string.IsNullOrWhiteSpace(_requestedDeviceName))
        {
            return microphoneDevices[0];
        }

        foreach (string microphoneDevice in microphoneDevices)
        {
            if (string.Equals(microphoneDevice, _requestedDeviceName, StringComparison.Ordinal))
            {
                return microphoneDevice;
            }
        }

        throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Requested microphone device was not found. Device={_requestedDeviceName}");
    }

    /// <summary>
    /// Checks the rates the microphone supports and decides the recording rate to use.
    /// If the target rate is unsupported, returns the supported rate closest to it.
    /// </summary>
    private int DetermineCaptureSampleRate(string deviceName, int targetSampleRate)
    {
        try
        {
            Microphone.GetDeviceCaps(deviceName, out int minFrequency, out int maxFrequency);

            // (0, 0) means any rate is supported (common on Android)
            bool isAnyRateSupported = minFrequency == 0 && maxFrequency == 0;
            if (isAnyRateSupported)
            {
                return targetSampleRate;
            }

            // The target rate is within the supported range
            bool isTargetSupported = targetSampleRate >= minFrequency && targetSampleRate <= maxFrequency;
            if (isTargetSupported)
            {
                return targetSampleRate;
            }

            // Unsupported: use the closest supported rate and resample to the target
            int captureRate = targetSampleRate < minFrequency ? minFrequency : maxFrequency;
            Debug.Log($"{nameof(MicrophoneFrameSource)}: Target sample rate {targetSampleRate}Hz is not supported by device. Using {captureRate}Hz with resampling. Device={deviceName}, Min={minFrequency}, Max={maxFrequency}");
            return captureRate;
        }
        catch (Exception exception)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Failed to query microphone capabilities.", exception);
        }
    }

    private void PumpMicrophoneSamples()
    {
        if (_microphoneClip == null)
        {
            return;
        }

        int currentSamplePosition = Microphone.GetPosition(_resolvedDeviceName);
        if (currentSamplePosition < 0)
        {
            return;
        }

        // The clip is a ring buffer: handle the write position wrapping around
        int availableSampleCount = currentSamplePosition - _lastReadSamplePosition;
        if (availableSampleCount < 0)
        {
            availableSampleCount += _microphoneClip.samples;
        }

        if (availableSampleCount <= 0)
        {
            return;
        }

        int firstSegmentSampleCount = Math.Min(availableSampleCount, _microphoneClip.samples - _lastReadSamplePosition);
        if (firstSegmentSampleCount > 0)
        {
            ReadClipSegmentIntoQueue(_lastReadSamplePosition, firstSegmentSampleCount);
        }

        int secondSegmentSampleCount = availableSampleCount - firstSegmentSampleCount;
        if (secondSegmentSampleCount > 0)
        {
            ReadClipSegmentIntoQueue(0, secondSegmentSampleCount);
        }

        _lastReadSamplePosition = currentSamplePosition;
    }

    private void ReadClipSegmentIntoQueue(int offsetSample, int sampleCount)
    {
        if (_microphoneClip == null || _sampleScratchBuffer == null)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Microphone clip is not ready.");
        }

        bool readSucceeded;
        try
        {
            readSucceeded = _microphoneClip.GetData(_sampleScratchBuffer, offsetSample);
        }
        catch (Exception exception)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Failed to read microphone audio data.", exception);
        }

        if (!readSucceeded)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: AudioClip.GetData returned false.");
        }

        int sampleValueCount = sampleCount * _channelCount;
        if (sampleValueCount <= 0)
        {
            throw new InvalidOperationException($"{nameof(MicrophoneFrameSource)}: Invalid read sample count.");
        }

        for (int sampleIndex = 0; sampleIndex < sampleCount; sampleIndex++)
        {
            float monoSample = ConvertToMono(_sampleScratchBuffer, sampleIndex, _channelCount);

            if (!_needsResampling)
            {
                // No resampling needed: enqueue as-is
                _pendingSamples.Enqueue(ConvertFloatToInt16(monoSample));
                continue;
            }

            // Bresenham-style accumulator resampling
            _resampleSampleSum += monoSample;
            _resampleInputCount++;
            _resampleAccumulator += _targetSampleRate;

            if (_resampleAccumulator >= _captureSampleRate)
            {
                // Downsampling: average the inputs since the last output (simple box filter)
                short outputSample = ConvertFloatToInt16(_resampleSampleSum / _resampleInputCount);

                // Upsampling: repeat the value as many times as needed (sample-and-hold)
                while (_resampleAccumulator >= _captureSampleRate)
                {
                    _pendingSamples.Enqueue(outputSample);
                    _resampleAccumulator -= _captureSampleRate;
                }

                _resampleSampleSum = 0.0f;
                _resampleInputCount = 0;
            }
        }
    }

    private static float ConvertToMono(float[] interleavedSamples, int sampleIndex, int channelCount)
    {
        float summedSample = 0.0f;
        int baseIndex = sampleIndex * channelCount;
        for (int channelIndex = 0; channelIndex < channelCount; channelIndex++)
        {
            summedSample += interleavedSamples[baseIndex + channelIndex];
        }

        return summedSample / channelCount;
    }

    private static short ConvertFloatToInt16(float sample)
    {
        float clampedSample = Mathf.Clamp(sample, -1.0f, 1.0f);
        int intSample = Mathf.RoundToInt(clampedSample * short.MaxValue);
        intSample = Mathf.Clamp(intSample, short.MinValue, short.MaxValue);
        return (short)intSample;
    }
}
```
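The resampling step in MicrophoneFrameSource can be checked outside Unity. The sketch below restates the same accumulator idea (averaging when downsampling, repeating samples when upsampling) as a standalone class so the output sample counts can be verified. `AccumulatorResampler` is a hypothetical name, and it uses `Math.Clamp`/`Math.Round` instead of Unity's `Mathf`.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical Unity-free restatement of the accumulator resampler.
public sealed class AccumulatorResampler
{
    private readonly int _inputRate;
    private readonly int _outputRate;
    private int _accumulator;
    private float _sum;
    private int _count;

    public AccumulatorResampler(int inputRate, int outputRate)
    {
        if (inputRate <= 0) throw new ArgumentOutOfRangeException(nameof(inputRate));
        if (outputRate <= 0) throw new ArgumentOutOfRangeException(nameof(outputRate));
        _inputRate = inputRate;
        _outputRate = outputRate;
    }

    // Feeds one mono input sample in [-1, 1] and appends zero or more 16-bit outputs.
    public void Push(float sample, List<short> output)
    {
        _sum += sample;
        _count++;
        _accumulator += _outputRate;

        if (_accumulator < _inputRate)
        {
            return;
        }

        // Downsampling: average the inputs collected since the last output.
        // Upsampling: repeat that value as many times as the accumulator allows.
        short value = ToInt16(_sum / _count);
        while (_accumulator >= _inputRate)
        {
            output.Add(value);
            _accumulator -= _inputRate;
        }

        _sum = 0.0f;
        _count = 0;
    }

    private static short ToInt16(float sample)
    {
        float clamped = Math.Clamp(sample, -1.0f, 1.0f);
        return (short)Math.Round(clamped * short.MaxValue);
    }
}
```

For example, one second of 48 kHz input yields exactly 16,000 output samples, and one second of 8 kHz input is stretched to 16,000 as well. A box average is only a crude low-pass filter, so some aliasing can remain when downsampling.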

```csharp
using System;
using System.IO;
using System.Runtime.InteropServices;
using UnityEngine;

/// <summary>
/// Thin wrapper around the Porcupine native library.
/// </summary>
public sealed class PorcupineWakeWordEngine : IDisposable
{
    private const string k_defaultDevice = "best";

    private IntPtr _handle = IntPtr.Zero;

    /// <summary>
    /// Sample rate required by Porcupine.
    /// </summary>
    public int SampleRate
    {
        get { return NativeMethods.pv_sample_rate(); }
    }

    /// <summary>
    /// Frame length required by Porcupine.
    /// </summary>
    public int FrameLength
    {
        get { return NativeMethods.pv_porcupine_frame_length(); }
    }

    /// <summary>
    /// Whether the native handle has been initialized.
    /// </summary>
    public bool IsInitialized
    {
        get { return _handle != IntPtr.Zero; }
    }

    /// <summary>
    /// Initializes Porcupine.
    /// </summary>
    public void Initialize(
        string accessKey,
        string modelFilePath,
        string keywordFilePath,
        float sensitivity)
    {
        if (string.IsNullOrWhiteSpace(accessKey))
        {
            throw new ArgumentException($"{nameof(PorcupineWakeWordEngine)}: AccessKey is empty.", nameof(accessKey));
        }

        if (string.IsNullOrWhiteSpace(modelFilePath))
        {
            throw new ArgumentException($"{nameof(PorcupineWakeWordEngine)}: Model file path is empty.", nameof(modelFilePath));
        }

        if (string.IsNullOrWhiteSpace(keywordFilePath))
        {
            throw new ArgumentException($"{nameof(PorcupineWakeWordEngine)}: Keyword file path is empty.", nameof(keywordFilePath));
        }

        if (!File.Exists(modelFilePath))
        {
            throw new FileNotFoundException($"{nameof(PorcupineWakeWordEngine)}: Model file was not found.", modelFilePath);
        }

        if (!File.Exists(keywordFilePath))
        {
            throw new FileNotFoundException($"{nameof(PorcupineWakeWordEngine)}: Keyword file was not found.", keywordFilePath);
        }

        if (sensitivity < 0.0f || sensitivity > 1.0f)
        {
            throw new ArgumentOutOfRangeException(nameof(sensitivity), $"{nameof(PorcupineWakeWordEngine)}: Sensitivity must be in range [0, 1].");
        }

        // Release any previous instance before re-initializing
        Dispose();

        IntPtr keywordPathPointer = IntPtr.Zero;
        IntPtr keywordPathArrayPointer = IntPtr.Zero;
        IntPtr sensitivityArrayPointer = IntPtr.Zero;

        try
        {
            // Build `const char *const *keyword_paths` with a single entry
            keywordPathPointer = Marshal.StringToHGlobalAnsi(keywordFilePath);
            keywordPathArrayPointer = Marshal.AllocHGlobal(IntPtr.Size);
            Marshal.WriteIntPtr(keywordPathArrayPointer, keywordPathPointer);

            // Build `const float *sensitivities` with a single entry
            sensitivityArrayPointer = Marshal.AllocHGlobal(sizeof(float));
            Marshal.Copy(new float[] { sensitivity }, 0, sensitivityArrayPointer, 1);

            PvStatus status = NativeMethods.pv_porcupine_init(
                accessKey,
                modelFilePath,
                k_defaultDevice,
                1,
                keywordPathArrayPointer,
                sensitivityArrayPointer,
                out _handle);

            if (status != PvStatus.Success)
            {
                string statusMessage = NativeMethods.GetStatusString(status);
                throw new InvalidOperationException(
                    $"{nameof(PorcupineWakeWordEngine)}: pv_porcupine_init failed. Status={status}, Message={statusMessage}");
            }

            Debug.Log($"{nameof(PorcupineWakeWordEngine)}: Initialized. Version={Version}, SampleRate={SampleRate}, FrameLength={FrameLength}");
        }
        catch (DllNotFoundException exception)
        {
            throw new InvalidOperationException($"{nameof(PorcupineWakeWordEngine)}: Porcupine native library was not found.", exception);
        }
        catch (EntryPointNotFoundException exception)
        {
            throw new InvalidOperationException($"{nameof(PorcupineWakeWordEngine)}: Porcupine native entry point was not found.", exception);
        }
        finally
        {
            if (sensitivityArrayPointer != IntPtr.Zero)
            {
                Marshal.FreeHGlobal(sensitivityArrayPointer);
            }

            if (keywordPathArrayPointer != IntPtr.Zero)
            {
                Marshal.FreeHGlobal(keywordPathArrayPointer);
            }

            if (keywordPathPointer != IntPtr.Zero)
            {
                Marshal.FreeHGlobal(keywordPathPointer);
            }
        }
    }

    /// <summary>
    /// Processes one frame of PCM and returns true if the wake word was detected.
    /// </summary>
    public bool ProcessFrame(short[] frame)
    {
        if (!IsInitialized)
        {
            throw new InvalidOperationException($"{nameof(PorcupineWakeWordEngine)}: Engine is not initialized.");
        }

        if (frame == null)
        {
            throw new ArgumentNullException(nameof(frame));
        }

        if (frame.Length != FrameLength)
        {
            throw new ArgumentException($"{nameof(PorcupineWakeWordEngine)}: Frame length does not match Porcupine frame length.");
        }

        PvStatus status = NativeMethods.pv_porcupine_process(_handle, frame, out int keywordIndex);
        if (status != PvStatus.Success)
        {
            string statusMessage = NativeMethods.GetStatusString(status);
            throw new InvalidOperationException(
                $"{nameof(PorcupineWakeWordEngine)}: pv_porcupine_process failed. Status={status}, Message={statusMessage}");
        }

        // keywordIndex is -1 when no keyword was detected
        return keywordIndex >= 0;
    }

    /// <summary>
    /// Releases native resources.
    /// </summary>
    public void Dispose()
    {
        if (_handle == IntPtr.Zero)
        {
            return;
        }

        try
        {
            NativeMethods.pv_porcupine_delete(_handle);
        }
        finally
        {
            _handle = IntPtr.Zero;
        }
    }

    private string Version
    {
        get
        {
            IntPtr versionPointer = NativeMethods.pv_porcupine_version();
            if (versionPointer == IntPtr.Zero)
            {
                return "unknown";
            }

            return Marshal.PtrToStringAnsi(versionPointer) ?? "unknown";
        }
    }

    private enum PvStatus
    {
        Success = 0,
        OutOfMemory = 1,
        IoError = 2,
        InvalidArgument = 3,
        StopIteration = 4,
        KeyError = 5,
        InvalidState = 6,
        RuntimeError = 7,
        ActivationError = 8,
        ActivationLimitReached = 9,
        ActivationThrottled = 10,
        ActivationRefused = 11,
    }

    private static class NativeMethods
    {
        private const string k_libraryName = "pv_porcupine";

        [DllImport(k_libraryName)]
        public static extern int pv_sample_rate();

        [DllImport(k_libraryName)]
        public static extern int pv_porcupine_frame_length();

        [DllImport(k_libraryName)]
        public static extern IntPtr pv_porcupine_version();

        [DllImport(k_libraryName)]
        public static extern IntPtr pv_status_to_string(PvStatus status);

        [DllImport(k_libraryName)]
        public static extern PvStatus pv_porcupine_init(
            [MarshalAs(UnmanagedType.LPStr)] string accessKey,
            [MarshalAs(UnmanagedType.LPStr)] string modelPath,
            [MarshalAs(UnmanagedType.LPStr)] string device,
            int numKeywords,
            IntPtr keywordPaths,
            IntPtr sensitivities,
            out IntPtr porcupineHandle);

        [DllImport(k_libraryName)]
        public static extern void pv_porcupine_delete(IntPtr porcupineHandle);

        [DllImport(k_libraryName)]
        public static extern PvStatus pv_porcupine_process(
            IntPtr porcupineHandle,
            [In] short[] pcm,
            out int keywordIndex);

        public static string GetStatusString(PvStatus status)
        {
            IntPtr statusPointer = pv_status_to_string(status);
            if (statusPointer == IntPtr.Zero)
            {
                return "unknown";
            }

            string statusString = Marshal.PtrToStringAnsi(statusPointer);
            return string.IsNullOrEmpty(statusString) ? "unknown" : statusString;
        }
    }
}
```
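`Initialize` above builds the `keyword_paths` pointer array and the `sensitivities` array by hand for a single keyword. Since `pv_porcupine_init` takes a keyword count, a generalized version for several keywords might look like the sketch below. `NativeKeywordArgs` is a hypothetical helper; it only uses standard `System.Runtime.InteropServices.Marshal` calls and does not call Porcupine itself.

```csharp
using System;
using System.Runtime.InteropServices;

// Hypothetical helper: builds `const char *const *keyword_paths` and
// `const float *sensitivities` for N keywords in unmanaged memory.
public sealed class NativeKeywordArgs : IDisposable
{
    private readonly IntPtr[] _stringPointers;

    public IntPtr KeywordPathArray { get; private set; }
    public IntPtr SensitivityArray { get; private set; }
    public int Count => _stringPointers.Length;

    public NativeKeywordArgs(string[] keywordPaths, float[] sensitivities)
    {
        if (keywordPaths == null) throw new ArgumentNullException(nameof(keywordPaths));
        if (sensitivities == null) throw new ArgumentNullException(nameof(sensitivities));
        if (keywordPaths.Length == 0 || keywordPaths.Length != sensitivities.Length)
        {
            throw new ArgumentException("Each keyword path needs exactly one sensitivity.");
        }

        _stringPointers = new IntPtr[keywordPaths.Length];
        try
        {
            // One unmanaged, null-terminated string per keyword path.
            for (int i = 0; i < keywordPaths.Length; i++)
            {
                _stringPointers[i] = Marshal.StringToHGlobalAnsi(keywordPaths[i]);
            }

            // Contiguous array of pointers to those strings.
            KeywordPathArray = Marshal.AllocHGlobal(IntPtr.Size * _stringPointers.Length);
            Marshal.Copy(_stringPointers, 0, KeywordPathArray, _stringPointers.Length);

            // Contiguous float array, in the same order as the paths.
            SensitivityArray = Marshal.AllocHGlobal(sizeof(float) * sensitivities.Length);
            Marshal.Copy(sensitivities, 0, SensitivityArray, sensitivities.Length);
        }
        catch
        {
            Dispose();
            throw;
        }
    }

    // Frees everything allocated above; safe to call more than once.
    public void Dispose()
    {
        if (SensitivityArray != IntPtr.Zero)
        {
            Marshal.FreeHGlobal(SensitivityArray);
            SensitivityArray = IntPtr.Zero;
        }

        if (KeywordPathArray != IntPtr.Zero)
        {
            Marshal.FreeHGlobal(KeywordPathArray);
            KeywordPathArray = IntPtr.Zero;
        }

        for (int i = 0; i < _stringPointers.Length; i++)
        {
            if (_stringPointers[i] != IntPtr.Zero)
            {
                Marshal.FreeHGlobal(_stringPointers[i]);
                _stringPointers[i] = IntPtr.Zero;
            }
        }
    }
}
```

You would pass `args.Count`, `args.KeywordPathArray`, and `args.SensitivityArray` in place of `1`, `keywordPathArrayPointer`, and `sensitivityArrayPointer`, keeping `args` alive until `pv_porcupine_init` returns. With several keywords, the `keywordIndex` from `pv_porcupine_process` tells you which one was detected, following the order of the paths.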

```csharp
using System;
using System.Collections;
using UnityEngine;

/// <summary>
/// Class for testing the wake word detection event.
/// Activates the specified GameObject on detection and deactivates it after a set time.
/// </summary>
public sealed class WakeWordEventTest : MonoBehaviour
{
    private const float k_displayDurationSeconds = 1.0f;

    [SerializeField]
    private WakeWordController _wakeWordController;

    [SerializeField]
    private GameObject _targetObject;

    private Coroutine _showCoroutine;

    private void OnEnable()
    {
        if (_wakeWordController != null)
        {
            _wakeWordController.WakeWordDetectedEvent += OnWakeWordDetected;
        }
        else
        {
            Debug.LogWarning($"{nameof(WakeWordEventTest)}: WakeWordController is not assigned.");
        }
    }

    private void OnDisable()
    {
        if (_wakeWordController != null)
        {
            _wakeWordController.WakeWordDetectedEvent -= OnWakeWordDetected;
        }

        StopShowCoroutine();
    }

    private void OnWakeWordDetected()
    {
        if (_targetObject == null)
        {
            Debug.LogWarning($"{nameof(WakeWordEventTest)}: TargetObject is not assigned.");
            return;
        }

        Debug.Log($"{nameof(WakeWordEventTest)}: Wake word detected. Showing target object.");

        // If the object is already being shown, cancel and restart the timer
        StopShowCoroutine();
        _showCoroutine = StartCoroutine(ShowAndHideCoroutine());
    }

    private IEnumerator ShowAndHideCoroutine()
    {
        _targetObject.SetActive(true);

        yield return new WaitForSeconds(k_displayDurationSeconds);

        if (_targetObject != null)
        {
            _targetObject.SetActive(false);
        }

        _showCoroutine = null;
    }

    private void StopShowCoroutine()
    {
        if (_showCoroutine != null)
        {
            StopCoroutine(_showCoroutine);
            _showCoroutine = null;
        }
    }
}
```

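Conclusion

It's simple, accurate, and a really handy library!

By calling the C API directly, the design avoids depending too heavily on any particular SDK, which should make future multi-platform expansion smoother. Feel free to use it as a base for your own voice AI development!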